Storage infrastructure is a long-term investment that must balance performance, scalability, and cost. As organizations generate and retain more data each year, storage environments must grow accordingly. Without careful planning, storage costs can quickly escalate due to expanding capacity requirements, infrastructure refresh cycles, and operational overhead.

Many organizations address this challenge by developing multi-year storage lifecycle plans that align infrastructure investments with projected data growth and technology refresh timelines. A structured lifecycle plan allows IT leaders to forecast capacity requirements, optimize procurement strategies, and keep storage infrastructure capable of supporting evolving workloads. For federal agencies, the plan is one part of a broader storage architecture strategy.

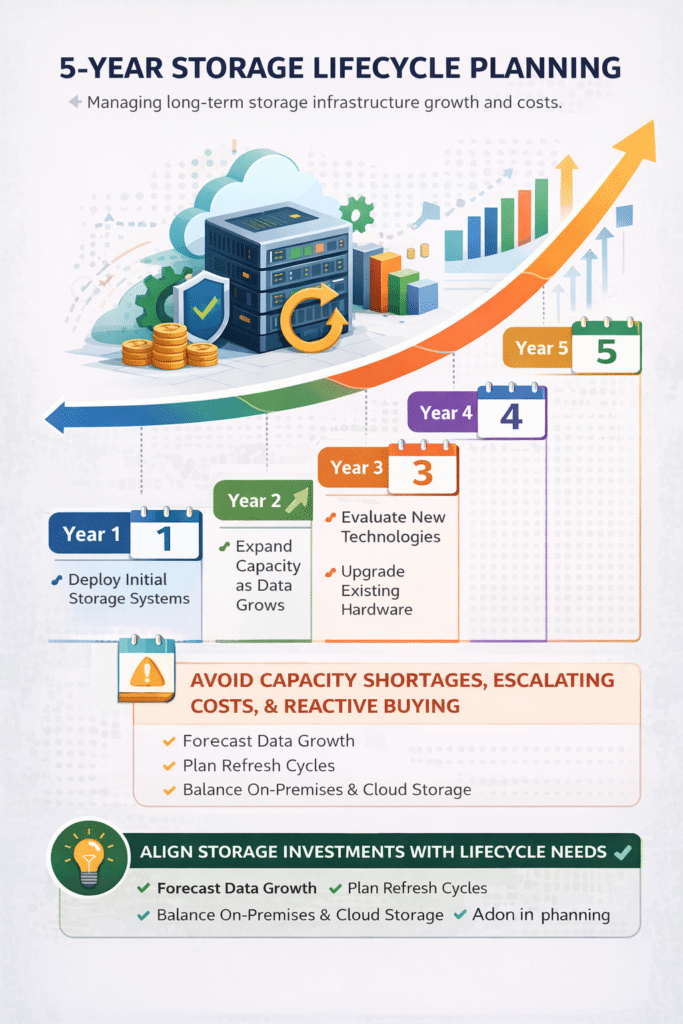

A five-year planning horizon is commonly used because it aligns with the typical lifecycle of enterprise storage systems. By planning storage infrastructure over this period, organizations can anticipate technology upgrades, control long-term costs, and avoid reactive purchasing decisions driven by unexpected capacity shortages.

Many organizations underestimate how quickly storage costs and infrastructure complexity can grow without a structured lifecycle plan. As data volumes expand and new analytics, AI, and cybersecurity systems generate additional datasets, storage environments can quickly become fragmented or inefficient. Without proactive planning, organizations may face unexpected capacity shortages, rising operational costs, and infrastructure limitations that slow down mission-critical workloads. A structured storage lifecycle strategy helps organizations anticipate these challenges and make informed infrastructure investments.

Storage lifecycle planning is the process of forecasting storage capacity, infrastructure investments, and operational costs over a multi-year period. A five-year storage lifecycle plan helps organizations align storage architecture, procurement decisions, and data growth projections to ensure storage environments remain scalable, cost-efficient, and capable of supporting future workloads.

Effective storage planning begins with understanding how data volumes are expected to grow. Nearly every organization is experiencing significant data expansion driven by new applications, analytics platforms, and regulatory retention requirements.

Common sources of data growth include:

As organizations adopt advanced analytics and AI-driven systems, data growth can accelerate even further. Storage lifecycle planning must therefore incorporate realistic projections of future data volume.

By forecasting how data will grow over time, organizations can estimate when storage capacity upgrades will be required and avoid unexpected infrastructure shortages.
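As a minimal sketch of this kind of forecast, the Python snippet below assumes a constant compound annual growth rate and reports the first plan year in which projected data exceeds installed capacity. The function names, the 500 TB starting point, the 30% growth rate, and the 1,200 TB of installed capacity are illustrative assumptions, not figures from a real environment.

```python
from typing import Optional


def project_capacity(current_tb: float, annual_growth_rate: float, years: int = 5) -> list[float]:
    """Projected data volume (TB) at the end of each plan year, assuming compound growth."""
    return [current_tb * (1 + annual_growth_rate) ** year for year in range(1, years + 1)]


def first_year_over_capacity(projection: list[float], installed_tb: float) -> Optional[int]:
    """Return the first plan year (1-based) whose projection exceeds installed capacity, or None."""
    for year, needed_tb in enumerate(projection, start=1):
        if needed_tb > installed_tb:
            return year
    return None


# Hypothetical inputs: 500 TB today, 30% yearly growth, 1,200 TB installed.
projection = project_capacity(current_tb=500.0, annual_growth_rate=0.30)
upgrade_year = first_year_over_capacity(projection, installed_tb=1200.0)

for year, needed_tb in enumerate(projection, start=1):
    print(f"Year {year}: {needed_tb:,.0f} TB")
print(f"Capacity exceeded in plan year: {upgrade_year}")
```

Real growth is rarely constant, so a model like this is best treated as a baseline that is adjusted when new workloads are planned.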

Storage costs extend far beyond the initial purchase of hardware. A complete lifecycle plan must consider the total cost of ownership (TCO) associated with storage infrastructure.

Key cost factors include:

Operational expenses can represent a significant portion of long-term storage costs. Energy consumption, maintenance contracts, and administrative workloads all contribute to the total cost of maintaining storage systems.

Understanding these cost drivers allows organizations to evaluate different architecture options and determine which storage strategies deliver the best long-term value.
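To illustrate how such a comparison might work, the sketch below adds a one-time acquisition cost to the recurring energy, maintenance, and administration costs mentioned above. The `StorageOption` class, the option names, and every dollar figure are hypothetical; a real TCO model would likely include more cost factors.

```python
from dataclasses import dataclass


@dataclass
class StorageOption:
    """A candidate architecture with simplified, assumed cost inputs (USD)."""
    name: str
    acquisition_cost: float
    annual_energy_cost: float
    annual_maintenance_cost: float
    annual_admin_cost: float

    def tco(self, years: int = 5) -> float:
        """Acquisition cost plus recurring costs over the planning horizon."""
        annual = (
            self.annual_energy_cost
            + self.annual_maintenance_cost
            + self.annual_admin_cost
        )
        return self.acquisition_cost + annual * years


# Hypothetical options: A is cheaper to buy, B is cheaper to run.
option_a = StorageOption("option-a", 400_000, 30_000, 40_000, 60_000)
option_b = StorageOption("option-b", 550_000, 15_000, 25_000, 40_000)

for option in (option_a, option_b):
    print(f"{option.name}: five-year TCO = ${option.tco():,.0f}")
```

With these assumed inputs, the option with the higher purchase price ends up with the lower five-year total, which is the kind of result an upfront-cost comparison would miss.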

Evaluating those options starts with understanding the major storage cost drivers.

Organizations often focus on the upfront cost of storage hardware, but the cost of poor storage architecture can be far greater over time. When storage environments are not designed with lifecycle planning in mind, organizations may experience operational inefficiencies, unexpected infrastructure upgrades, and performance bottlenecks that affect critical workloads.

One common issue is overprovisioning expensive storage tiers. Without proper tiered storage strategies, organizations may store archival data or backups on high-performance infrastructure intended for mission-critical applications. This can dramatically increase storage costs while providing little operational benefit.

Poor storage architecture can also lead to compute inefficiencies. If storage systems cannot deliver sufficient throughput or capacity, applications and analytics workloads may run more slowly. In environments that rely on expensive compute infrastructure—such as GPU clusters used for AI workloads—storage bottlenecks can leave costly resources underutilized.

Another hidden cost involves reactive infrastructure purchases. When organizations fail to forecast storage growth, they may be forced to make emergency capacity purchases or rapid infrastructure upgrades. These reactive decisions often lead to higher procurement costs and rushed deployments that may not align with long-term architecture goals.

Operational complexity is another major factor. Storage environments that lack automation, lifecycle policies, or centralized management tools can require significant administrative effort to maintain. Over time, this increased operational burden can translate into higher staffing requirements and increased risk of configuration errors.

By contrast, organizations that design storage architectures around long-term lifecycle planning can avoid these hidden costs. A well-structured storage strategy ensures that infrastructure investments align with workload requirements, performance needs, and long-term data growth.

Avoiding these hidden costs also depends on sound data governance.

One of the most effective ways to control storage costs is through tiered storage architecture. Not all data requires the same level of performance, so storing every dataset on high-performance infrastructure can be unnecessarily expensive.

Tiered storage strategies place data on different storage platforms depending on access frequency, performance requirements, and retention policies.

For example:

By aligning storage performance with actual workload requirements, organizations can significantly reduce infrastructure costs while maintaining performance where it matters most.
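One simple way to express a tiering policy is a rule based on access recency. The sketch below is an assumption-laden example: the tier names, the 30-day and 180-day thresholds, and the sample datasets are all hypothetical, and a real policy would also weigh performance requirements and retention rules.

```python
from collections import defaultdict


def assign_tier(days_since_last_access: int, hot_days: int = 30, warm_days: int = 180) -> str:
    """Pick a tier from access recency. Thresholds and tier names are illustrative."""
    if days_since_last_access < 0:
        raise ValueError("days_since_last_access must be non-negative")
    if days_since_last_access <= hot_days:
        return "high-performance"
    if days_since_last_access <= warm_days:
        return "capacity"
    return "archive"


# Hypothetical datasets: (name, size in TB, days since last access).
datasets = [
    ("analytics-working-set", 40.0, 2),
    ("quarterly-reports", 15.0, 90),
    ("backup-2021", 120.0, 700),
]

# Sum the capacity that would land on each tier under this policy.
tb_per_tier: dict[str, float] = defaultdict(float)
for name, size_tb, idle_days in datasets:
    tb_per_tier[assign_tier(idle_days)] += size_tb

print(dict(tb_per_tier))
```

Totaling capacity per tier in this way gives a rough view of how much data actually needs the most expensive infrastructure.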

Cloud tiering strategies can support this approach.

Accurate capacity forecasting is a critical component of storage lifecycle planning. Organizations must estimate how quickly storage capacity will grow and determine when expansions will be required.

Capacity forecasting typically includes:

Storage architects often build capacity models that project data growth over several years. These models help determine when additional storage nodes or storage tiers will be required.

Proactive forecasting ensures infrastructure investments occur before storage capacity becomes constrained.

A five-year storage lifecycle plan depends on having a reliable estimate of how storage demand will grow over time. Capacity forecasting allows organizations to anticipate infrastructure requirements before shortages occur and helps align storage investments with budget planning cycles.

The first step in building a storage forecast is analyzing historical data growth trends. Many organizations track storage consumption across their infrastructure platforms, allowing architects to estimate annual growth rates. While historical growth does not always predict future demand perfectly, it provides a useful baseline for capacity planning.
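A common way to turn two historical measurements into a baseline rate is the compound annual growth rate. The sketch below assumes only a starting and an ending consumption figure are available; the function name and the 200 TB and 338 TB readings are hypothetical.

```python
def annual_growth_rate(start_tb: float, end_tb: float, years: float) -> float:
    """Compound annual growth rate between two capacity measurements."""
    if start_tb <= 0 or end_tb <= 0 or years <= 0:
        raise ValueError("start_tb, end_tb, and years must be positive")
    return (end_tb / start_tb) ** (1 / years) - 1


# Hypothetical history: consumption grew from 200 TB to 338 TB over two years.
rate = annual_growth_rate(start_tb=200.0, end_tb=338.0, years=2)
print(f"Estimated annual growth: {rate:.1%}")
```

Because the rate depends only on the two endpoints, it smooths over year-to-year swings, so it is worth checking against intermediate measurements where they exist.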

Next, organizations should evaluate planned technology initiatives that may increase storage demand. New analytics platforms, machine learning systems, security monitoring tools, and regulatory data retention requirements can all significantly increase storage usage. Incorporating these projects into capacity forecasts helps ensure infrastructure can support future workloads.

Storage architects often develop multi-year capacity models that estimate how storage demand will grow over time. These models may project total storage capacity requirements for each year of the lifecycle plan while also estimating how data will be distributed across storage tiers.

For example, organizations may forecast growth across several categories:

These forecasts allow organizations to determine when capacity expansions will be required and help align procurement decisions with long-term infrastructure planning.
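A per-category forecast can be sketched by giving each category its own growth rate and summing the results for each plan year. The category names, starting sizes, and growth rates below are hypothetical placeholders rather than recommended values.

```python
# Hypothetical categories: name -> (current TB, assumed annual growth rate).
categories = {
    "databases": (100.0, 0.15),
    "analytics": (200.0, 0.40),
    "backups": (300.0, 0.20),
}


def forecast_by_category(
    categories: dict[str, tuple[float, float]], years: int = 5
) -> list[dict[str, float]]:
    """Return one dict per plan year mapping each category to its projected TB."""
    return [
        {name: tb * (1 + rate) ** year for name, (tb, rate) in categories.items()}
        for year in range(1, years + 1)
    ]


forecast = forecast_by_category(categories)
for year, by_category in enumerate(forecast, start=1):
    total_tb = sum(by_category.values())
    print(f"Year {year}: total {total_tb:,.0f} TB")
```

Keeping the categories separate makes it easier to see which workloads drive the total and to update a single rate when one of them changes.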

Capacity forecasting is not a one-time exercise. Organizations should revisit these models regularly as new applications, data sources, and operational requirements emerge. Continuous forecasting helps ensure that storage infrastructure evolves alongside organizational needs while avoiding sudden capacity shortages or emergency infrastructure purchases.

Enterprise storage infrastructure typically follows five-year refresh cycles. Over time, hardware components age, maintenance costs increase, and new technologies become available that offer improved performance and efficiency.

Planning for refresh cycles allows organizations to replace aging infrastructure before reliability issues arise. Modern storage platforms may also deliver improved density and energy efficiency, reducing long-term operational costs.

A five-year lifecycle plan typically includes:

Planning these transitions in advance allows organizations to avoid sudden large capital expenditures and ensures storage infrastructure evolves alongside technology improvements.
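As a rough sketch of refresh planning, the snippet below groups systems by the year in which they reach the end of an assumed five-year cycle. The function name, the inventory, and the rule that overdue systems are scheduled for the first plan year are illustrative assumptions.

```python
from collections import defaultdict


def refresh_schedule(
    install_years: dict[str, int],
    plan_start: int,
    plan_years: int = 5,
    cycle_years: int = 5,
) -> dict[int, list[str]]:
    """Group systems by the calendar year in which their refresh falls due.

    Systems already past due are scheduled for the first plan year;
    systems due after the plan horizon are omitted.
    """
    plan_end = plan_start + plan_years - 1
    schedule: dict[int, list[str]] = defaultdict(list)
    for system, installed in install_years.items():
        due = max(installed + cycle_years, plan_start)
        if due <= plan_end:
            schedule[due].append(system)
    return dict(sorted(schedule.items()))


# Hypothetical inventory: system name -> install year.
inventory = {"array-a": 2019, "array-b": 2022, "archive-1": 2024, "array-c": 2026}
print(refresh_schedule(inventory, plan_start=2025))
```

Laying the refreshes out by year shows where replacements cluster, which is where spreading purchases across budget cycles may be worth considering.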

Many organizations now incorporate cloud storage platforms into their storage lifecycle planning. Cloud services offer flexible capacity that can expand as needed, reducing the need for large upfront infrastructure purchases.

However, cloud storage also introduces new cost considerations such as:

Hybrid storage architectures that combine on-premises infrastructure with cloud storage often provide the most flexibility. Organizations can keep performance-sensitive workloads on local infrastructure while using cloud platforms for backups, archival data, or analytics datasets.

Balancing these environments allows organizations to optimize costs while maintaining performance and control over critical workloads.
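When weighing a cloud tier against on-premises capacity, it can help to project the recurring bill over the same five-year horizon. The sketch below assumes a deliberately simplified two-part pricing model, a per-TB monthly storage charge plus a per-TB retrieval charge. The function name and all prices and volumes are hypothetical; actual provider pricing has more components and varies widely.

```python
def monthly_cloud_cost(
    stored_tb: float,
    retrieved_tb: float,
    storage_price_per_tb: float,
    retrieval_price_per_tb: float,
) -> float:
    """Monthly bill under a simple two-part pricing model (both prices are assumptions)."""
    return stored_tb * storage_price_per_tb + retrieved_tb * retrieval_price_per_tb


# Hypothetical archive: 300 TB stored, 10 TB read back per month.
cost = monthly_cloud_cost(
    stored_tb=300.0,
    retrieved_tb=10.0,
    storage_price_per_tb=5.0,
    retrieval_price_per_tb=20.0,
)
five_year_cost = cost * 12 * 5

print(f"Monthly: ${cost:,.0f}, five-year: ${five_year_cost:,.0f}")
```

This flat projection ignores data growth; combining it with a capacity forecast would give a more realistic comparison.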

Operational efficiency plays an important role in controlling long-term storage costs. Storage environments that require extensive manual administration can increase staffing requirements and operational complexity.

Automation tools can help reduce administrative overhead by managing tasks such as:

Automated storage management tools allow IT teams to operate larger storage environments without significantly increasing operational costs.

Storage lifecycle planning should not occur in isolation. Instead, it should align with broader organizational strategies related to analytics, cybersecurity, and digital transformation initiatives.

New applications, AI projects, or compliance requirements can significantly impact storage demand. By coordinating storage planning with broader IT strategy, organizations can ensure that infrastructure investments support future innovation.

Effective lifecycle planning ensures that storage systems remain capable of supporting emerging workloads without requiring frequent infrastructure redesign.

Data will continue to grow as organizations collect information from more applications, devices, and analytics platforms. Without a structured lifecycle plan, storage environments can quickly become expensive and difficult to manage.

By forecasting data growth, implementing tiered storage strategies, planning hardware refresh cycles, and balancing on-premises and cloud infrastructure, organizations can build storage architectures that remain cost-efficient over time.

A well-designed five-year storage lifecycle plan provides a roadmap for scaling infrastructure while maintaining control over costs and operational complexity.

Storage modernization is a critical component of enterprise IT transformation across federal agencies. By aligning architecture, cybersecurity, lifecycle planning, and procurement strategy, agencies can build storage environments capable of supporting mission-critical workloads well into the future.

For agencies beginning their modernization journey, a structured evaluation of architecture requirements, security posture, and lifecycle planning can help identify the most effective path forward.

Explore more storage architecture strategies in our storage resource hub.

READY TO TALK THROUGH YOUR STORAGE ENVIRONMENT?


From architecture to acquisition, our team of storage experts can help you align your environment with mission needs, compliance requirements, and future growth. Wildflower Solutions Architects are here to help with every step.
