As federal agencies modernize their IT infrastructure, storage architecture has become a critical design decision. Data volumes are expanding rapidly as agencies collect information from mission systems, research programs, operational platforms, and increasingly from analytics and AI-driven workloads. At the same time, agencies must protect sensitive data from cyber threats while ensuring that information remains accessible to users and applications across distributed environments.

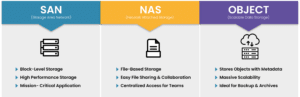

Overview: SAN, NAS, and object storage are three primary storage architectures used in modern IT environments. SAN (Storage Area Network) provides block-level storage designed for high-performance applications like databases and virtualization. NAS (Network Attached Storage) delivers file-based storage for shared access across users and systems. Object storage stores data as scalable objects with metadata, making it ideal for massive datasets, backups, and cloud environments.

Although SAN, NAS, and object storage all provide ways to store and access data, they operate very differently under the hood.

SAN storage delivers block-level storage that appears to servers as locally attached disks. Applications can read and write directly to these storage blocks, allowing extremely fast and predictable performance. SAN environments typically rely on high-speed network technologies such as Fibre Channel or high-performance Ethernet to deliver low latency and high throughput.
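To make block-level access concrete, here is a minimal sketch in Python. It is a toy model, not how any SAN product is implemented: the `BlockDevice` class, the 4096-byte block size, and the in-memory backing buffer are all assumptions chosen for illustration. The point it shows is that the consumer addresses storage by block number, with no notion of files or directories.

```python
import io

BLOCK_SIZE = 4096  # assumed block size, for illustration only


class BlockDevice:
    """Toy stand-in for a SAN volume: a flat array of fixed-size blocks."""

    def __init__(self, num_blocks: int) -> None:
        self.num_blocks = num_blocks
        # An in-memory buffer of zeros plays the role of the remote volume.
        self._backing = io.BytesIO(bytes(num_blocks * BLOCK_SIZE))

    def _check(self, index: int) -> None:
        if not 0 <= index < self.num_blocks:
            raise IndexError("block index out of range")

    def write_block(self, index: int, data: bytes) -> None:
        self._check(index)
        if len(data) > BLOCK_SIZE:
            raise ValueError("data larger than one block")
        self._backing.seek(index * BLOCK_SIZE)
        # Pad short writes so every block stays exactly BLOCK_SIZE bytes.
        self._backing.write(data.ljust(BLOCK_SIZE, b"\x00"))

    def read_block(self, index: int) -> bytes:
        self._check(index)
        self._backing.seek(index * BLOCK_SIZE)
        return self._backing.read(BLOCK_SIZE)


volume = BlockDevice(num_blocks=8)
volume.write_block(3, b"ledger row 42")
first_bytes = volume.read_block(3)[:13]
```

In a real deployment the operating system or database engine layers its own file system or page format on top of these blocks, which is why block storage gives applications control over how data is structured.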

NAS storage provides file-based storage accessible over standard network protocols such as NFS or SMB. Instead of interacting with raw storage blocks, users and applications access files organized within directories. NAS systems function much like centralized file servers but are designed for large-scale enterprise environments.

Object storage takes a fundamentally different approach. Instead of organizing data into files or blocks, object storage stores data as objects within a flat namespace. Each object contains the data itself along with metadata and a unique identifier, allowing the system to scale to enormous data volumes.
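The object model described above can be sketched in a few lines of Python. This is a hypothetical in-memory illustration, not the API of any real object storage service: `ObjectStore`, `StoredObject`, `put`, `get`, and `find` are names invented for this example. It shows the three parts of an object (data, metadata, unique identifier) and a flat namespace with no directory hierarchy.

```python
import uuid
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class StoredObject:
    """An object is the data plus descriptive metadata."""
    data: bytes
    metadata: Dict[str, str] = field(default_factory=dict)


class ObjectStore:
    """Hypothetical flat-namespace store: one dictionary, no directories."""

    def __init__(self) -> None:
        self._objects: Dict[str, StoredObject] = {}

    def put(self, data: bytes, metadata: Optional[Dict[str, str]] = None) -> str:
        # Every object gets a unique identifier instead of a path.
        object_id = str(uuid.uuid4())
        self._objects[object_id] = StoredObject(data, dict(metadata or {}))
        return object_id

    def get(self, object_id: str) -> StoredObject:
        return self._objects[object_id]

    def find(self, **criteria: str) -> List[str]:
        # Return the IDs of objects whose metadata matches every criterion.
        return [
            object_id
            for object_id, obj in self._objects.items()
            if all(obj.metadata.get(k) == v for k, v in criteria.items())
        ]


store = ObjectStore()
scan_id = store.put(b"raw sensor capture", {"program": "survey", "year": "2024"})
store.put(b"older capture", {"program": "survey", "year": "2023"})
matches = store.find(program="survey", year="2024")
```

Because lookups go through identifiers and metadata rather than a directory tree, adding capacity does not require restructuring a hierarchy, which is one reason the model scales well.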

Each architecture solves different problems, which is why modern environments often incorporate more than one storage model.


SAN storage is typically used for mission-critical applications that require consistent performance and low latency. Because SAN operates at the block level, it allows operating systems and applications to control how data is structured and accessed, which is particularly important for databases and transactional systems.

Common SAN workloads include:

For example, a financial management system or mission operations platform may require extremely reliable and consistent storage performance. SAN environments allow administrators to tightly control performance characteristics and ensure applications receive the resources they need.

However, SAN systems often require specialized infrastructure and management expertise. Fibre Channel networks, zoning configurations, and block-level storage provisioning can make SAN environments more complex than other storage approaches.

NAS storage is designed primarily for shared file access across multiple users and systems. Because it operates at the file level, NAS platforms provide familiar directory structures and file permissions that make them easy to integrate with existing workflows.

Common NAS use cases include:

NAS systems are often easier to deploy and manage than SAN environments because they operate using standard network protocols and do not require specialized block storage configuration.

In federal environments, NAS platforms frequently support collaborative work environments where multiple teams must access shared datasets. They are also commonly used for analytics workloads that process large volumes of files rather than transactional database operations.

However, NAS may not deliver the same level of performance consistency required for some mission-critical applications. File-based protocols introduce additional overhead compared to block storage, which can affect latency-sensitive workloads.

Object storage has become increasingly important as organizations manage massive volumes of unstructured data. Unlike SAN or NAS systems, object storage platforms are designed to scale horizontally across many storage nodes, allowing them to store billions of objects without the limitations of traditional file systems.

Object storage is particularly well suited for:

One of the key advantages of object storage is its ability to store extensive metadata alongside each object. This metadata makes it easier to search, categorize, and analyze data at scale, which is especially useful for analytics and AI applications.

Object storage is also highly durable. Many platforms replicate objects across multiple storage nodes to ensure that data remains accessible even if hardware components fail.
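As a rough illustration of replication, the following Python sketch writes each object to several nodes and reads from whichever replica is still reachable. Everything here is an assumption made for the example: the `StorageNode` and `ReplicatedStore` classes, the replica count of three, and the simple CRC-based placement rule. Production platforms use more sophisticated placement and often erasure coding rather than full copies.

```python
import zlib
from typing import Dict, List


class StorageNode:
    """Hypothetical storage node that can be taken offline."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.online = True
        self._objects: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        if not self.online:
            raise ConnectionError(f"{self.name} is offline")
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        if not self.online:
            raise ConnectionError(f"{self.name} is offline")
        return self._objects[key]


class ReplicatedStore:
    def __init__(self, nodes: List[StorageNode], replicas: int = 3) -> None:
        if replicas > len(nodes):
            raise ValueError("need at least as many nodes as replicas")
        self.nodes = nodes
        self.replicas = replicas

    def _placement(self, key: str) -> List[StorageNode]:
        # Deterministic placement: hash the key to a starting node, then
        # take the next `replicas` nodes around the ring.
        start = zlib.crc32(key.encode("utf-8")) % len(self.nodes)
        return [self.nodes[(start + i) % len(self.nodes)]
                for i in range(self.replicas)]

    def put(self, key: str, data: bytes) -> None:
        # Simplification: a write fails if any target node is offline.
        for node in self._placement(key):
            node.put(key, data)

    def get(self, key: str) -> bytes:
        # Try each replica in turn; one surviving copy is enough.
        for node in self._placement(key):
            try:
                return node.get(key)
            except ConnectionError:
                continue
        raise ConnectionError("all replicas unavailable")


nodes = [StorageNode(f"node-{i}") for i in range(5)]
cluster = ReplicatedStore(nodes, replicas=3)
cluster.put("report.pdf", b"quarterly report")
cluster._placement("report.pdf")[0].online = False  # simulate a hardware failure
recovered = cluster.get("report.pdf")
```

The read still succeeds after the first replica goes offline because two other nodes hold the same object.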

The trade-off is that object storage generally does not provide the low-latency performance required for traditional transactional workloads. It is optimized for scalability and durability rather than for the fastest possible access to individual records.

Most modern storage environments do not rely on a single architecture. Instead, organizations implement hybrid storage architectures that combine SAN, NAS, and object storage platforms to support different types of workloads.

For example:

By matching each storage platform to the workloads it supports best, agencies can balance performance, scalability, and cost efficiency.

Hybrid architectures also integrate more easily with cloud storage services, where object storage models dominate. Many agencies use on-premises SAN or NAS infrastructure for operational workloads while leveraging cloud object storage for disaster recovery or archival storage.


Regardless of the storage model chosen, security must remain a top priority. Storage systems increasingly serve as targets for cyberattacks, particularly ransomware campaigns that attempt to encrypt or destroy critical data.

Modern storage architectures should include:

Many modern storage platforms now integrate cybersecurity features directly into the storage layer, helping organizations detect threats earlier and recover more quickly from incidents.

Selecting the right storage architecture ultimately depends on workload requirements, data growth expectations, and operational priorities. Organizations should evaluate several factors when making this decision:

In most cases, the best approach is not choosing one storage model over another but designing a storage architecture that leverages the strengths of each technology.

As federal agencies continue to modernize their infrastructure, storage architecture will play an increasingly important role in enabling mission success. Data-driven decision-making, artificial intelligence, and distributed mission systems all rely on storage platforms that are scalable, resilient, and secure.

By understanding the differences between SAN, NAS, and object storage—and how each supports different types of workloads—IT leaders can design storage environments that meet today’s needs while remaining flexible enough to support the next generation of data-driven government operations.

Explore more storage architecture strategies in our storage resource hub.

Wildflower Solutions Architects are here to help with every step

From architecture to acquisition, our team of storage experts can help you align your environment with mission needs, compliance requirements, and future growth.

Hybrid storage architectures use multiple storage models together, allowing organizations to match each workload with the storage platform that best supports its performance, scalability, and access requirements. In many environments, SAN storage is used for high-performance applications such as databases and virtualization platforms that require low latency and consistent throughput. NAS storage provides shared file access for collaborative environments, research datasets, and analytics workflows that operate on file-based data. Object storage is often used for large-scale data repositories, backups, archives, and cloud-integrated storage because it can scale to store massive volumes of unstructured data.

By combining these architectures, agencies can create a tiered storage strategy that balances performance and cost. Mission-critical applications run on high-performance SAN systems, shared data environments use NAS platforms, and large datasets or long-term storage needs are handled by object storage systems. This hybrid approach also makes it easier to integrate with cloud storage services and scale storage capacity as data volumes grow.
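The tiering logic in the paragraph above can be expressed as a simple rule, sketched here in Python. The `Workload` fields, the `Tier` enum, and the order in which the rules are checked are assumptions made for illustration; a real placement decision would also weigh capacity, cost, compliance, and existing infrastructure.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SAN = "SAN"
    NAS = "NAS"
    OBJECT = "object"


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of a workload's storage needs."""
    name: str
    latency_sensitive: bool   # e.g. databases, virtualization
    shared_file_access: bool  # e.g. collaborative or research datasets


def choose_tier(workload: Workload) -> Tier:
    # Assumed priority: latency needs first, then shared file access,
    # with object storage as the default for everything else.
    if workload.latency_sensitive:
        return Tier.SAN
    if workload.shared_file_access:
        return Tier.NAS
    return Tier.OBJECT


workloads = [
    Workload("financial-db", latency_sensitive=True, shared_file_access=False),
    Workload("research-share", latency_sensitive=False, shared_file_access=True),
    Workload("backup-archive", latency_sensitive=False, shared_file_access=False),
]
placements = {w.name: choose_tier(w) for w in workloads}
```

Here the database lands on SAN, the shared research data on NAS, and the archive on object storage, mirroring the tiered strategy described in the text.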

AI and analytics workloads typically require high throughput, parallel data access, and the ability to manage extremely large datasets, which means the best storage architecture often depends on the specific stage of the data pipeline. High-performance NAS systems are commonly used to support active AI training workloads because they allow multiple compute nodes to access shared datasets simultaneously. In some environments, SAN storage may support the underlying infrastructure for high-performance compute clusters or virtualization platforms running analytics tools.

For large-scale data repositories and data lakes, object storage is often the preferred architecture because it can store massive volumes of unstructured data and attach metadata that helps organize and analyze datasets. Many organizations therefore combine NAS and object storage for AI environments: NAS for high-performance training and active processing, and object storage for scalable data lakes and long-term dataset storage. This hybrid approach allows agencies to support advanced analytics while controlling infrastructure costs as data volumes continue to grow.
